#2. Understanding Least-Recently-Used Cache: Part 2.

Implementing LRU Cache in Go with Custom Expiry...

Introduction

Welcome back to part 2 of this series! In the first part of this series, I talked about what a cache was, its real-world applications, and the data structures that make up a Least-Recently-Used (LRU) cache. In case you missed it, here, go take a look as we’ll be building on the concepts explained in the first post: Understanding LRU Cache Part 1.

Heads up, this is a slightly lengthy read, so to get the most out of it, make sure you’re not in a hurry! Enjoy…

LRU Cache operations breakdown

Before we go into the code, I want to breakdown how an LRU cache with a fixed expiry time works. Depending on your needs, you can implement it not to use an expiry, but this article focuses on demonstrating one with that feature.

As I said in my previous post, it’s made up of two major data structures: A Doubly Linked List and a Map.

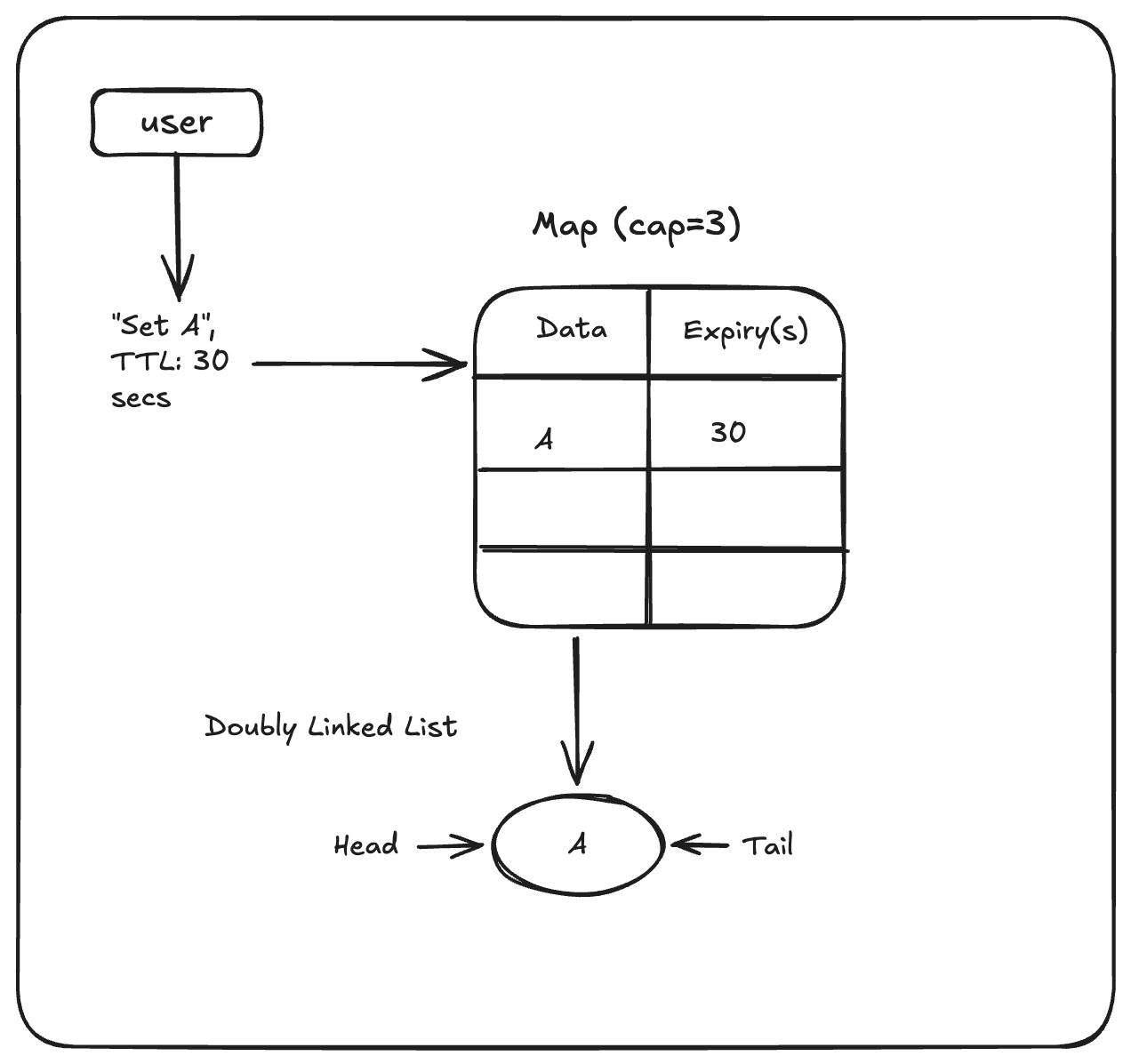

Let’s assume we have an LRU cache with a capacity of 3. Remember, LRU caches have a fixed size. The Map works as the in-memory data store where our elements get set and retrieved while the Doubly Linked List is used for ordering the elements of the cache internally. The DLL determines what elements get prepped for eviction and the ones that get to stay.

When the first element gets added to the cache:

The data and expiry is set on the Map,

It starts out at the Head/Front of our DLL. Because it’s the only element, it’s also the Tail/Back of our DLL.

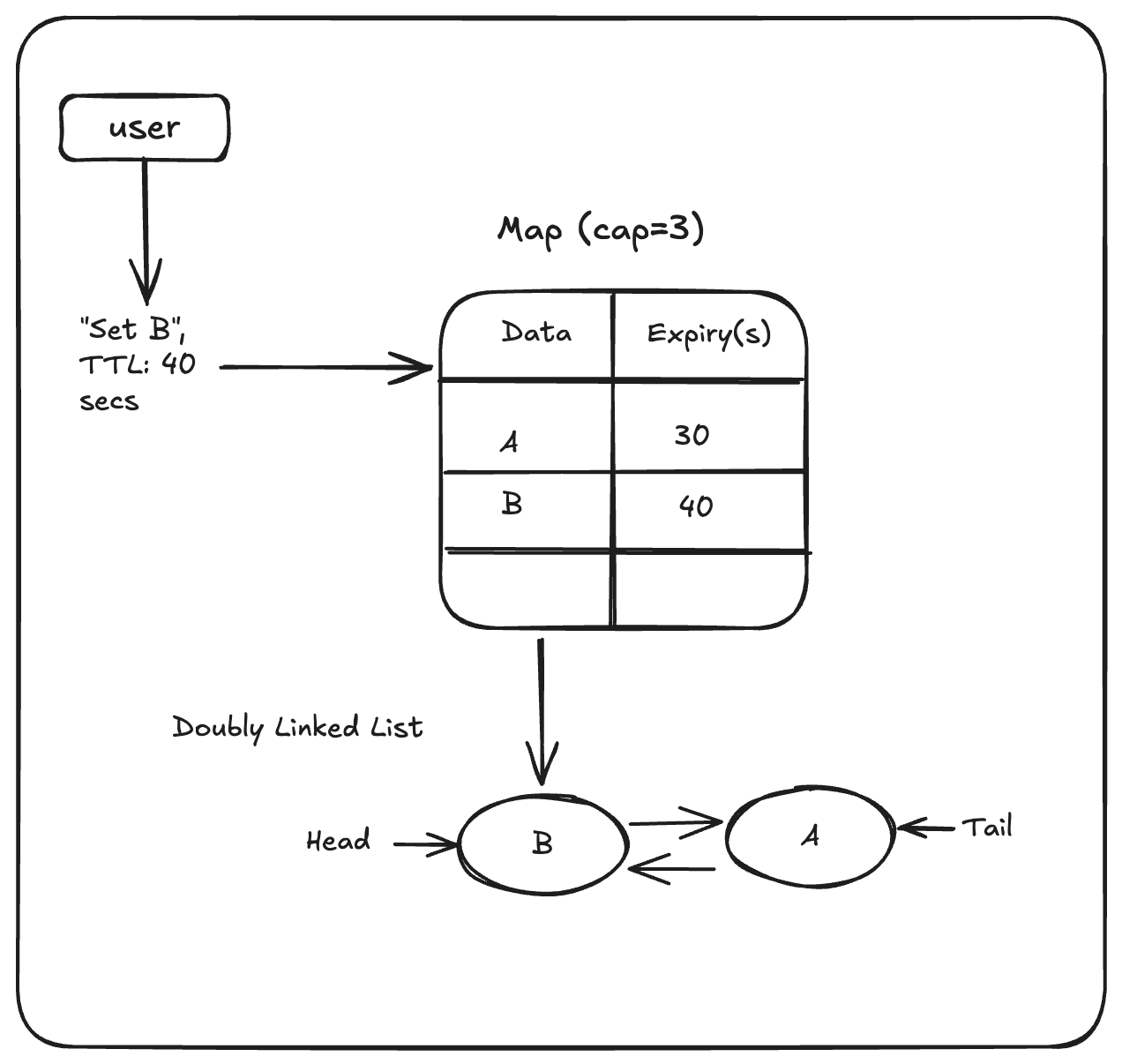

When a second element gets added, it becomes the new Head while the previous element gets moved to the Tail/Back of the DLL.

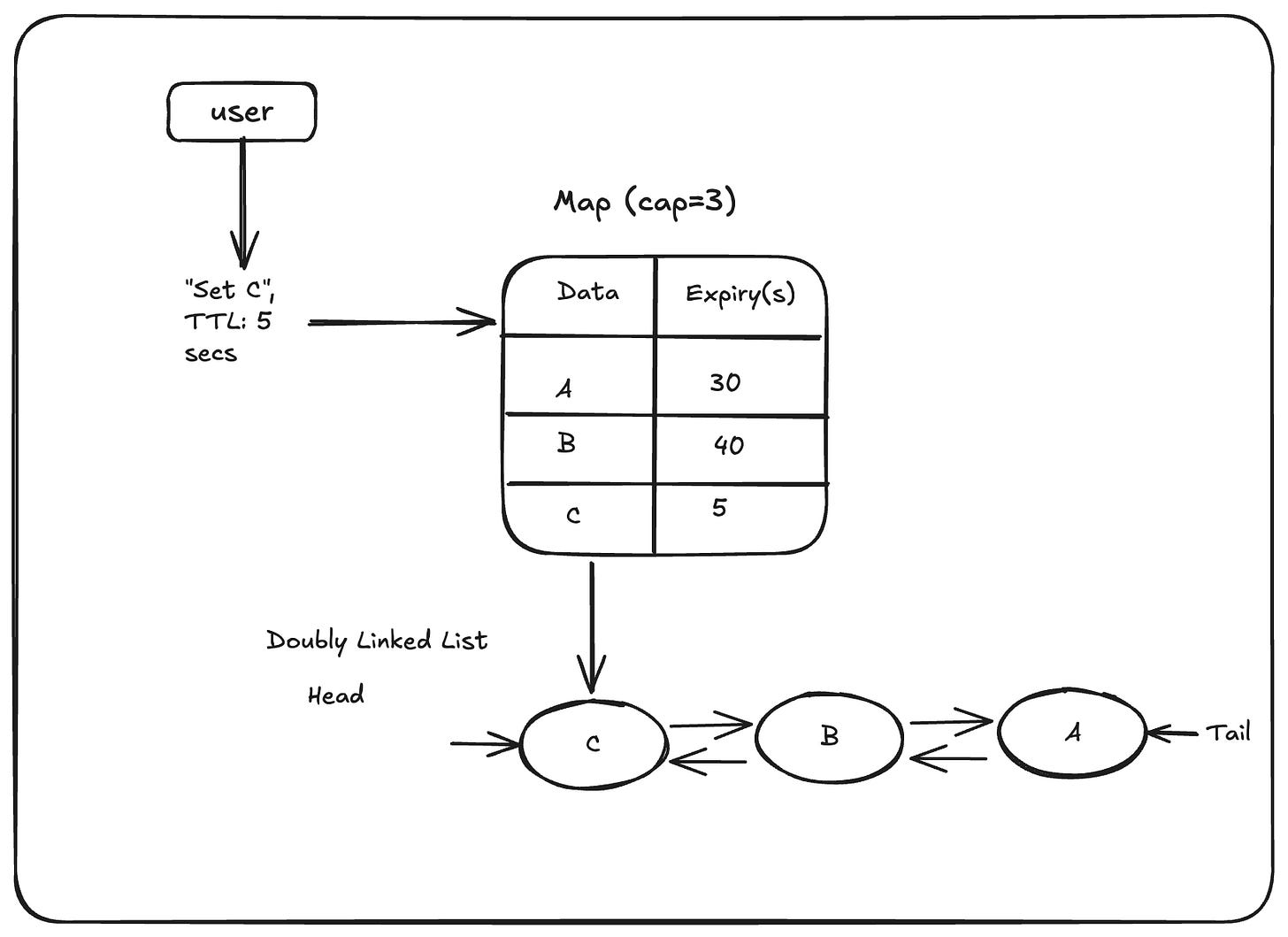

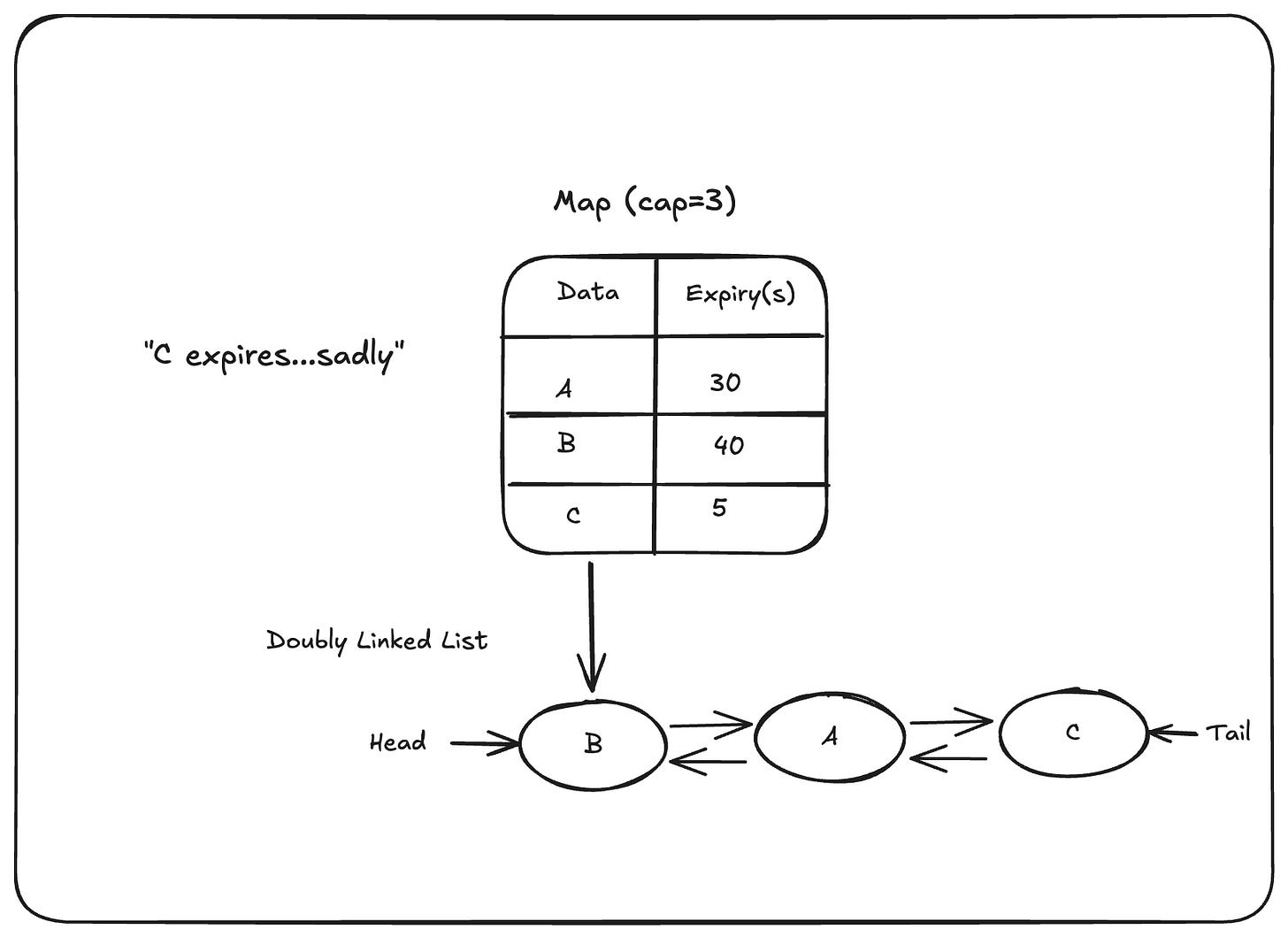

Let’s add another element, C, with an expiry of 5 seconds to our cache. For the purpose of this illustration, we’ll assume C expires before A and B.

Question: Can you guess what would happen when C expires?

Let’s see next illustration to see if you guessed right!

Upon expiry, element C gets moved to the Tail/Back of the DLL. It is designed this way because expired data should logically be evicted first, and treated as a cache miss.

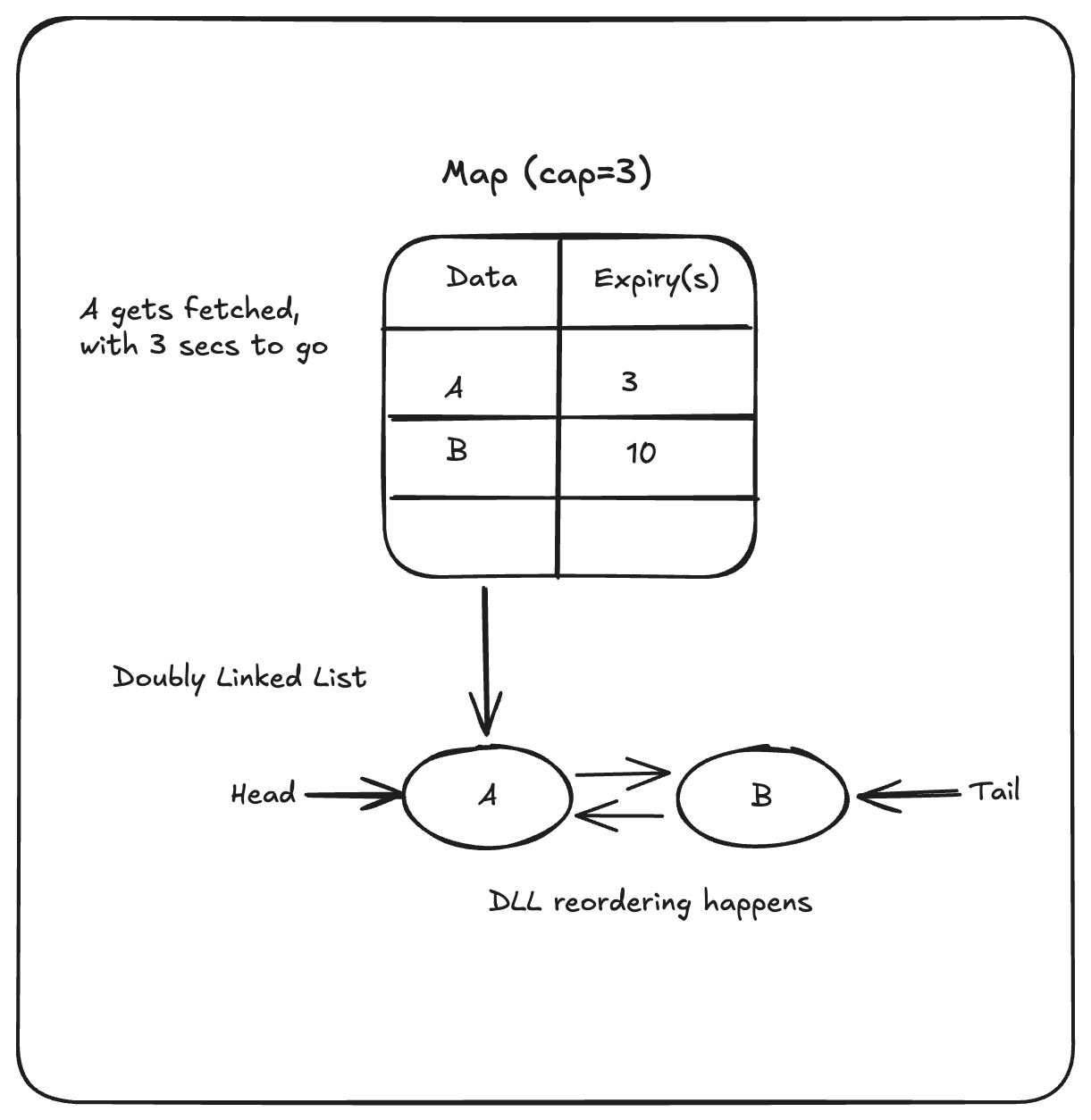

Next Question: In a scenario where element C has been evicted, and element A has about 3 seconds left to expiry but is fetched from the cache, what happens?

I hope you guessed right! With 3 seconds to go, when element A gets fetched, it gets moved to the Head/Front of the DLL, making it the most recently used item in the cache!

There’s a caveat though… If our cache wasn’t designed with an expiry, all would be fine and dandy, A would stay at the Head of the DLL until the next element gets added. However, after the 3 seconds are up, A would be moved again to the Tail of the DLL and then evicted before B (which still has some seconds more left). You could design your cache to set a new expiry TTL once an element becomes the most recently used again, but that’s up to you. For me, I want my expired data evicted!

Implementing LRU Cache in Golang.

TL;DR: If you don’t want/need to follow along and you just want to see the full code implementation (with a slightly different example in its main.go), here you go: LRU-Cache-with-TTL.

For the rest of this article, it is assumed that you have a code editor, and Go is installed on your computer, if not kindly do so, here’s a great guide. It is also assumed that you have basic knowledge of the Go programming language. If not, kindly go here:https://go.dev/tour/welcome/1. Don’t worry I’ll wait for you 🌚.

Open up your code editor, and in your terminal, navigate to your projects folder if you have one, anywhere is fine as long as you’re comfortable with the location. Let’s make a folder and navigate to it:

mkdir lru && cd lruor you could just do this using the GUI.

Ensure your editor is also open on that folder.

Next let’s create two files. The first go file which will hold our LRU cache implementation, and the main file:

touch lru.go main.gofor both files, ensure you have the text package main added to top of the files, else our compiler would complain.

In our lru.go, we add this piece of code:

package main

import (

"container/list"

"fmt"

"log"

"sync"

"time"

)

type Item struct {

key string

value interface{}

expiry time.Time

}

type ItemExpiryCallback func(key string, value interface{})

type cacheOptions struct {

shouldLog bool

expiryCallback ItemExpiryCallback

}

type LRUCache struct {

cacheMap map[string]*list.Element

list *list.List

capacity int

options cacheOptions

sync.RWMutex

}N.B: Don’t worry if the compiler removes the other imports, it’ll be auto-imported as we go along.

Instead of rolling out our own Doubly linked list, we can just use the one provided to us by Golang, it’s in the container package and is known as list. For more information: https://pkg.go.dev/container/list.

Our LRUCache struct is the starting point. Remember we learned earlier that an LRU cache is made up of a map and a doubly linked list. We define the struct and give it the following fields:

cacheMap map[string]*list.Elementthis is a map(or object for those of you with a JS background) that holds key-value pairs where the keys must be strings and the values must hold pointers to elements of a Doubly linked list. *list.Element will point to a struct that holds a Value field, for more info on this: https://pkg.go.dev/container/list

list *list.List

capacity intthe list field is a pointer to the Doubly Linked List that Go provides us. This list comes with a bunch of utility functions that enable us perform CRUD operations on the list. The next field, capacity, will hold the size of the cache itself. An LRU cache has a fixed size, depending on your needs, it could be 1000, or 100, it’s up to you.

package main

import (

...

"sync"

...

)

type ItemExpiryCallback func(key string, value interface{})

type cacheOptions struct {

shouldLog bool

expiryCallback ItemExpiryCallback

}

options cacheOptions

sync.RWMutexThe last two fields of the LRUCache struct contain our options which is defined by a custom struct called cacheOptions as shown above. These options allow us to control the behaviour of the cache. Properties:

shouldLog: Should logging operations occur when things happen within the cache?

expiryCallback: This enables the caller of our cache to define operations that should happen when an item on the cache is expired. It is of the type

ItemExpiryCallback,a function that takes a key, and a value, these parameters refer to properties of the expired item.

Lastly, for the LRUCache struct, we have the sync.RWMutex field. This is a reader-writer mutex(mutual exclusion lock). It is a synchronization primitive that enables go routines to safely write to and read from maps in Go, multiple go routines can read from a map while only one can write to the map per operation. For more on this: https://pkg.go.dev/sync#RWMutex.

type Item struct {

key string

value interface{}

expiry time.Time

}Last on our list of data structures needed for this Cache implementation is the Item struct, which holds the properties of a cache item. Every item must have a key of type string, a value that can hold any data type(we want our cache to have the ability to hold an arbitrary number of data types), and of course, an expiry time.

Now that we’ve defined the properties of our LRU cache, lets move on to behaviour. I’ll break down the operations we need to implement so you can have an overview of how it behaves, we want:

A construtor function that gives us new instances of our LRU cache,

A cache method that enables us to SET new key-value pairs on the cache with fixed expiry,

A cache method that enables us to GET cache items, expired items will result in a cache miss,

utility cache methods for removing tail elements of cache items and also for printing the list of cache items to the console.

Constructor function

For our constructor function, we want every new cache instance to be configured based on capacity and cacheOptions that we would pass to it. It should either return a pointer to the cache instance or nil.

func NewLRUCacheWithTTL(capacity int, options cacheOptions) *LRUCache {

if capacity <= 0 {

return nil

}

return &LRUCache{

capacity: capacity,

list: list.New(),

options: options,

cacheMap: make(map[string]*list.Element),

}

}For the rest of the cache, we:

initialize a new doubly linked list and assign it to

list,use the Go’s

makefunction to initialize a map of string to list elements.

The constructor returns nil if we pass it a non-positive capacity.

Cache Set Method

Set is a method defined on the LRUCache with a pointer reciever. It accepts the key, value, and expiry(called ttl) as inputs and returns a boolean data type signifying if the item was set successfully.

Firstly, we restrict write access to the cache by calling the

Lock()method. We’re able to do this because we embedded sync.RWMutex struct into our cache. Then we free the lock at the end of the operation using the defer keyword.

// Set sets a new key and value into the cache with a TTL option.

// It returns true if a new element has been created.

// TTL - time-to-live

func (lru *LRUCache) Set(key string, value interface{}, ttl time.Duration) bool {

lru.Lock()

defer lru.Unlock()

...

}Next, we define our expiry time by adding the ttl to the current time. So if our ttl is 30 seconds, our expiry will be the current time + 30 seconds. Then we use the AfterFunc() method on Go’s time package to define a function that will run after the expiry time is reached. This method will run in a separate go routine.

expiry := time.Now().Add(ttl) // func() is set to run after the ttl set for a cache entry // It moves the list element to the tail of the doubly linked list // It will be removed at the next lru.Set Operation where the capacity of the cache is reached time.AfterFunc(ttl, func() { if lru.options.shouldLog { log.Printf("Expiring key: %s", key) } lru.Lock() defer lru.Unlock() if found, ok := lru.cacheMap[key]; ok { item := found.Value.(*Item) if time.Now().After(item.expiry) { lru.list.MoveToBack(found) } if lru.options.shouldLog { log.Printf("Item moved to tail of list") } } })

The anonymous function safely reads the item from the cache, then it checks if it’s expired. If it is, we move the element to the Tail/Back of the DLL.

Next, we want to check if a key exists in the cache already before making the update. If it does, we simply update the value and expiry that was passed in. This would be equivalent to a database update query.

if foundItem, ok := lru.cacheMap[key]; ok { item := foundItem.Value.(*Item) item.value = value item.expiry = expiry lru.list.MoveToFront(foundItem) if lru.options.shouldLog { log.Printf("Item %s moved to front of list", key) lru.printList() } return true }

Now that we’ve treated the scenario where a key might exist, that leaves us with the path where the item creates a new cache row. We create a new item, add it to the Head/Front of the list, and update the Map with the newly added list element. Then we check if our cache is at capacity, if it is, we ensure to remove the tail/last element from the cache using the removeLastElement unexpected function. We’ll talk about what that’s doing under the hood later.

item := &Item{

key: key,

value: value,

expiry: expiry,

}

elem := l.list.PushFront(item)

l.keyMap[key] = elem

if l.list.Len() > l.size {

l.removeLastElement()

}

if l.opts.withLogs {

log.Printf("Elem %s added to the front", key)

l.printList()

}

return trueAnd we’re done with the Set operation, here’s the full code:

// Set sets a new key and value into the cache with a TTL option.

// It returns true if a new element has been created.

// TTL - time-to-live

func (lru *LRUCache) Set(key string, value interface{}, ttl time.Duration) bool {

lru.Lock()

defer lru.Unlock()

expiry := time.Now().Add(ttl)

// func() is set to run after the ttl set for a cache entry

// It moves the list element to the tail of the doubly linked list

// It will be removed at the next lru.Set Operation where the capacity of the cache is reached

time.AfterFunc(ttl, func() {

if lru.options.shouldLog {

log.Printf("Expiring key: %s", key)

}

lru.Lock()

defer lru.Unlock()

if found, ok := lru.cacheMap[key]; ok {

item := found.Value.(*Item)

if time.Now().After(item.expiry) {

lru.list.MoveToBack(found)

}

if lru.options.shouldLog {

log.Printf("Item moved to tail of list")

}

}

})

if foundItem, ok := lru.cacheMap[key]; ok {

item := foundItem.Value.(*Item)

item.value = value

item.expiry = expiry

lru.list.MoveToFront(foundItem)

if lru.options.shouldLog {

log.Printf("Item %s moved to front of list", key)

lru.printList()

}

return true

}

item := &Item{

key: key,

value: value,

expiry: expiry,

}

e := lru.list.PushFront(item)

lru.cacheMap[key] = e

if lru.list.Len() > lru.capacity {

lru.removeTailElement()

}

if lru.options.shouldLog {

log.Printf("%s item added to front of list", key)

lru.printList()

}

return true

}Cache Get Method

The Get method accepts a single key as an input and returns two values, the value of the key provided to it, and a boolean value expressing whether the item requested is expired.

// Get gets by key and returns the value and if the entry is expired.

// If expired it is moved to the back of the list else it gets

// moved to front as the most recently used

func (lru *LRUCache) Get(key string) (value interface{}, expired bool) {

lru.RLock()

defer lru.RUnlock()

if found, ok := lru.cacheMap[key]; ok {

item := found.Value.(*Item)

//treat expired data as cache miss

expired := time.Now().After(item.expiry)

if expired {

return nil, expired

}

lru.list.MoveToFront(found)

return item.value, expired

}

return nil, true

}

It obtains a reader lock in order to safely read from the cache. If the item is found, we check for expiry first. If the item is expired, we treat it as a cache miss because we don’t want our cache returning stale data. Remember that at the point of expiry, the item would be moved to the tail/back of the list. However, if the item is found, we move it to the Head/Front of the list, then we return the value and the expiry boolean (it would be false in this case).

Other Cache Metods: removeTailElement, removeElement, and printList.

removeTailElement

In the Set method above, you may have seen the use of a method: lru.removeTailElement().This method helps to remove the least-recently-used element in a list when our cache is at capacity. Here’s how it’s defined:

// removeTailElement removes the tail element of the list and deletes it from the cache

func (lru *LRUCache) removeTailElement() {

lru.removeElement(lru.list.Back())

}It simply calls another method removeElement, and passes the tail element of the list to it. Back() is a utility method provided by the underlying list implementation from Go.

removeElement

This method is responsible for removing the element of a list item, deleting it from the cache, and calling the expiryCallback on an item if one is set.

// removeElement removes an element of the referenced doubly linked list

// It also deletes the item from the cacheMap and calls the itemExpiryCallback

func (lru *LRUCache) removeElement(item *list.Element) {

if item == nil {

return

}

lru.list.Remove(item)

found := item.Value.(*Item)

delete(lru.cacheMap, found.key)

if lru.options.shouldLog {

log.Printf("removing item...key:%v, value:%v, exp:%v", found.key, found.value, found.expiry)

lru.printList()

}

if lru.options.expiryCallback != nil {

lru.options.expiryCallback(found.key, found.value)

}

}printList

This is the last method on our list, and all it does is show us the elements in our Doubly linked list for ease of tracking.

// printList logs all the elements of the doubly linked list from head to tail

func (lru *LRUCache) printList() {

for p := lru.list.Front(); p != nil; p = p.Next() {

item := p.Value.(*Item)

fmt.Printf("key: %s, value: %v \n", item.key, item.value)

}

}Testing our code…

Phew! If you’ve made it this far I applaud you. It’s time to see if our implementation does what we expect it to do.

In our main.go, let’s update it with this logic:

package main

import (

"fmt"

"log"

"time"

)

func expCallback(key string, value interface{}) {

log.Printf("CB::key %v with value: %v removed from cache", key, value)

}

func main() {

fmt.Println("Inititating new LRUCache...")

cache := NewLRUCacheWithTTL(3, cacheOptions{

shouldLog: true,

expiryCallback: expCallback,

})

cache.Set("A", 1, time.Duration(30*time.Second))

cache.Set("B", 2, time.Duration(40*time.Second))

cache.Set("C", 3, time.Duration(5*time.Second))

time.Sleep(5 * time.Second)

value, isExpired := cache.Get("C")

fmt.Printf("value:%d, isExpired:%v\n", value, isExpired)

}

In our main function, we initialize a cache with a capacity of 3. Then, like our example above, we update our cache with three items, A, B, and C with 30, 40, and 5 seconds respectively. Then, we make our program idle for 5 seconds to let the go routines finish, and also to see if C gets evicted successfully. Let’s run and see!

In your terminal, run:

go run .Expected Console output:

Inititating new LRUCache...

2026/03/27 07:27:28 A item added to front of list

key: A, value: 100

2026/03/27 07:27:28 B item added to front of list

key: B, value: 100

key: A, value: 100

2026/03/27 07:27:28 C item added to front of list

key: C, value: 100

key: B, value: 100

key: A, value: 100

value:%!d(<nil>), isExpired:trueAs expected, for each item added, we get a log message with a timestamp of when it was added ('cause we enabled logging). Then, using the internal printList() method, we see how each element gets added, and displaced upon the new addition of an element. A starts at the Head/Front of the list and with a new addition, gets moved to the tail. B starts at the Head as well, then gets moved to the next node when C is added.

Furthermore, we see that after waiting for 5 seconds and attempting to fetch C from the cache, we get a cache miss!

What about when we add 4 items (which is greater than the configured capacity) before any item expires?

func main() {

fmt.Println("Inititating new LRUCache...")

cache := NewLRUCacheWithTTL(3, cacheOptions{

shouldLog: true,

expiryCallback: expCallback,

})

cache.Set("A", 1, time.Duration(30*time.Second))

cache.Set("B", 2, time.Duration(40*time.Second))

cache.Set("C", 3, time.Duration(30*time.Second))

time.Sleep(1 * time.Second)

cache.Set("D", 4, time.Duration(10*time.Second))

} using the same command, we run the program…

Expected Console output:

Inititating new LRUCache...

2026/03/27 07:54:55 A item added to front of list

key: A, value: 1

2026/03/27 07:54:55 B item added to front of list

key: B, value: 2

key: A, value: 1

2026/03/27 07:54:55 C item added to front of list

key: C, value: 3

key: B, value: 2

key: A, value: 1

2026/03/27 07:54:56 removing item...key:A, value:1, exp:2026-03-27 07:55:25.518956 +0100 WAT m=+30.000159459

key: D, value: 4

key: C, value: 3

key: B, value: 2

2026/03/27 07:54:56 CB::key A with value: 1 removed from cache

2026/03/27 07:54:56 D item added to front of list

key: D, value: 4

key: C, value: 3

key: B, value: 2Let’s break down what’s happening above:

A gets added to our map, and our list, becoming the head of the list.

B gets added to the map and the list, it displaces A to become the head of the list. The same happens for C.

When element D gets added to the cache, something different happens:

2026/03/27 07:54:56 removing item...key:A, value:1, exp:2026-03-27 07:55:25.518956 +0100 WAT m=+30.000159459

We get a log to the console informing us that the first element we added, A, is getting evicted from the cache. This line is triggered from within the Set method of our lru.go file:

if lru.list.Len() > lru.capacity {

lru.removeTailElement()

}Before completing the insertion, we check that the capacity of the cache hasn’t been exceeded. If it has, we kick the least used element, which is A. Also notice that the expiry had not been reached before it was removed. It was removed at 07:54:56, but expiry was exp: 07:55:25.518956.

We’ve come to the end of our learning session, I hope this series was insightful. Kindly play around with the code, using different scenarios to really understand how the operation works. All thoughts and feedback are welcome!